
I was just reading a TechCrunch story reporting that many venture capitalists believe the cloud market has matured to the point of saturation. Many startups are said to be opting out of the cloud once they leave the incubator stage. There is also an observed trend of ever-growing cloud infrastructure moving back toward the edge, closer to local connections. Is it because private cloud is more reliable but less affordable, while public cloud is more prone to attacks? But was the cloud designed to decline in the near future? I don’t think so.

Hyperscale Storage

Before understanding hyperscale data centers, let’s get acquainted with hyperscale storage. Hyperscale storage is not as “hyper” as its name might suggest, yet it has confused many IT developers for a long time. One common misconception is that hyperscale storage is designed only for large infrastructures like LinkedIn, Amazon, Netflix, etc. Hyperscale storage is often mentioned alongside “hyper-convergence”, and the aim is to give the infrastructure the leverage to scale independently. I know it may sound confusing, but powerful technologies are rarely easy to understand. Some data-intensive workloads like Hadoop, Elastic etc. expect Direct Attached Storage (DAS) as their default storage infrastructure. These are problems that large cloud hosting providers face on a daily basis. Enterprises with smaller budgets, on the other hand, have a limited server count and can rarely justify the extra expenditure on another rack of servers.

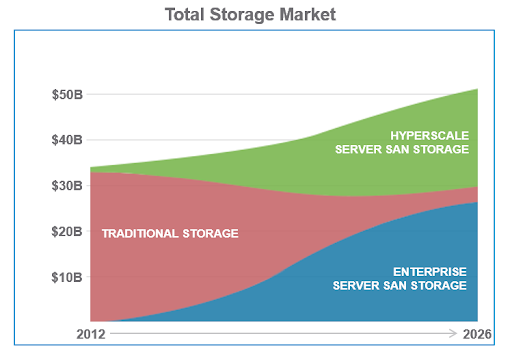

See the increase in demand for SAN storage and the steep decrease in the traditional kind of storage… By 2026, most traditional storage is expected to vanish from the market and be taken over by hyperscale storage…

But with DAS, scaling out data-intensive workloads is not a big task. It works quite differently from traditional shared storage such as SAN and NAS. These conventional storage solutions introduce network latency, which creates a bottleneck for the workloads.

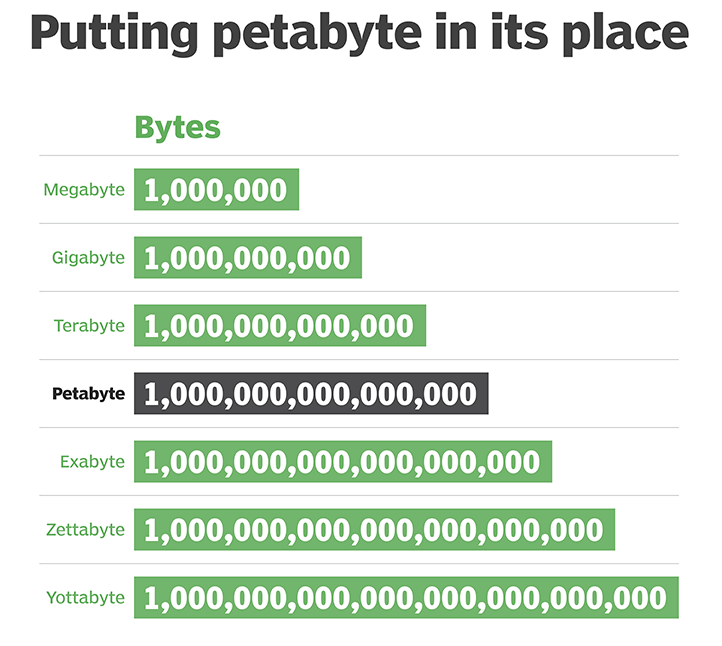

In layman’s terms, hyperscale storage can rapidly increase in size, efficiency and performance. The capacity of a hyperscale storage solution is generally measured in petabytes.

Hyperscale storage deals in petabytes for now, but given the exponential pace at which data is growing, it may before long reach the 24-zero level (10^24 bytes, a yottabyte)…
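To put these units in perspective, here is a minimal Python sketch of the arithmetic. It assumes decimal (SI) units, where a petabyte is 10^15 bytes and the “24 zero level” is a yottabyte (10^24 bytes). The function names `convert` and `doublings_between` are made up for this illustration, and counting doublings is only a way to show the size of the gap, not a growth forecast.

```python
# Decimal (SI) storage units expressed in bytes.
UNITS = {
    "terabyte": 10**12,
    "petabyte": 10**15,
    "exabyte": 10**18,
    "zettabyte": 10**21,
    "yottabyte": 10**24,  # the "24 zero level"
}


def convert(amount, from_unit, to_unit):
    """Convert an amount of storage from one unit to another."""
    return amount * UNITS[from_unit] / UNITS[to_unit]


def doublings_between(small_unit, large_unit):
    """Count how many times one small unit must double to reach one large unit."""
    size = UNITS[small_unit]
    target = UNITS[large_unit]
    doublings = 0
    while size < target:
        size *= 2
        doublings += 1
    return doublings


if __name__ == "__main__":
    print(convert(1, "petabyte", "terabyte"), "terabytes in a petabyte")
    print(convert(1, "yottabyte", "petabyte"), "petabytes in a yottabyte")
    print(doublings_between("petabyte", "yottabyte"), "doublings from PB to YB")
```

A petabyte-scale system is a billion times smaller than a yottabyte, which is why the jump is still some way off even with fast growth.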

So the major differences between hyperscale and conventional storage solutions are:

- Storage is measured in petabytes instead of terabytes.

- It serves many users with a limited number of applications, unlike conventional storage, which serves fewer users but more applications.

- Hyperscale storage has fewer features than conventional storage solutions, as it focuses on maximizing raw storage at minimum cost.

- Hyperscale storage involves less human intervention and relies more on automation.

But the misconception that hyperscale storage is confined to the largest infrastructures still remains. In fact, it is suitable for big repositories, cloud computing, social media networks and even government agencies.

The horizontal approach to scaling

What is the first thing that comes to mind when we hear the word “scaling”?

It usually has something to do with growing a robust system so that it can take on more data, regardless of whether the context is cloud, big data or storage. With hyperscale, though, it’s not just any scaling; it’s scaling to a whole new level.

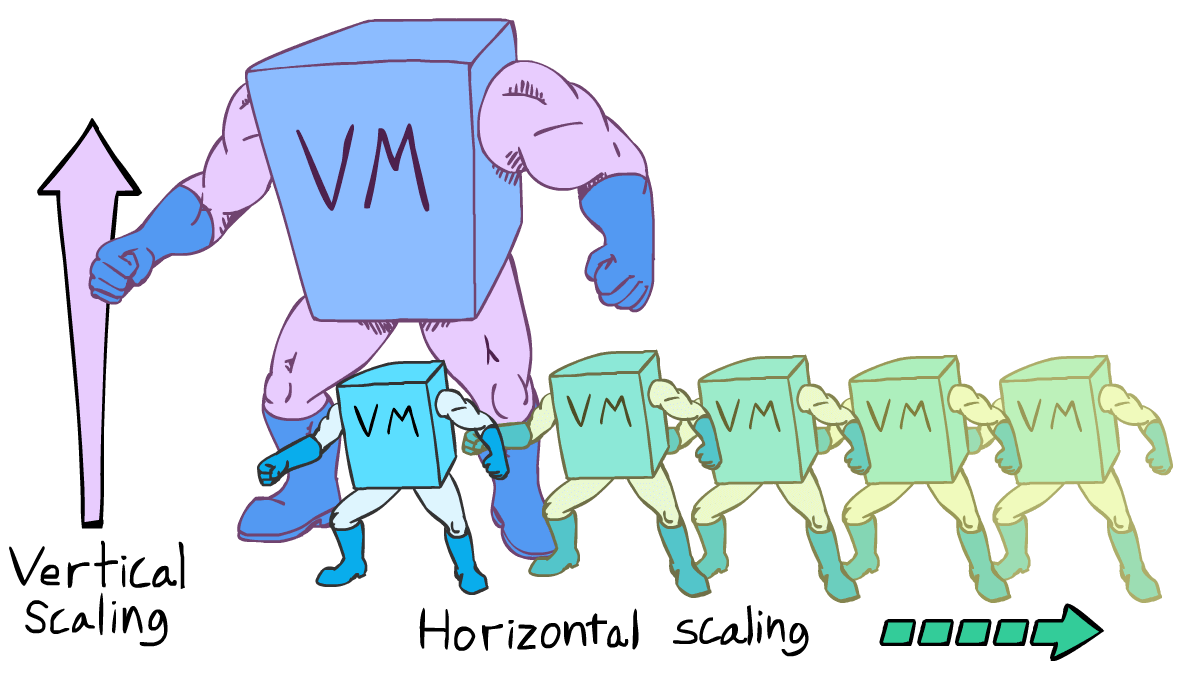

Instead of stacking more power onto the existing machines, the horizontal approach to scaling involves adding machines and thus scaling out…

Scaling is usually thought of as “scaling up”, which is the vertical approach to scaling the infrastructure. This vertical approach involves adding more power to the existing machines. The alternative is “scaling out”, the horizontal approach, in which whole machines are added to the computing environment.
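The difference between the two approaches can be sketched in a few lines of Python. This is only an illustration: the `Machine` type, its `cores` and `storage_tb` fields, and the numbers used are all hypothetical and not taken from any real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Machine:
    cores: int
    storage_tb: int


def scale_up(cluster, extra_cores, extra_storage_tb):
    """Vertical scaling: same number of machines, each one made more powerful."""
    return [
        Machine(m.cores + extra_cores, m.storage_tb + extra_storage_tb)
        for m in cluster
    ]


def scale_out(cluster, new_machine, count):
    """Horizontal scaling: existing machines stay as they are, more are added."""
    return list(cluster) + [new_machine] * count


def total_capacity(cluster):
    """Return (total cores, total storage in TB) for the whole cluster."""
    return (
        sum(m.cores for m in cluster),
        sum(m.storage_tb for m in cluster),
    )


if __name__ == "__main__":
    cluster = [Machine(cores=16, storage_tb=10)] * 4

    scaled_up = scale_up(cluster, extra_cores=16, extra_storage_tb=10)
    scaled_out = scale_out(cluster, Machine(cores=16, storage_tb=10), count=4)

    print(len(scaled_up), total_capacity(scaled_up))
    print(len(scaled_out), total_capacity(scaled_out))
```

In this toy example both approaches reach the same total capacity, but scaling up is bounded by how large a single machine can get, while scaling out only requires adding more machines of the same kind.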

Generally, hyperscaling takes three forms:

- In the physical infrastructure

- Scaling computing tasks

- Scaling financial resources

Hyperscale data center vs smaller data centers

There are technological reasons why smaller data centers cannot adopt the configurations and computing level of a hyperscale data center. With hyperscale you get open-source data center fabrics and migration to an incomparably faster network. But it’s not all about technology; it’s also about ROI (Return on Investment). Maintaining and refreshing small on-premises data centers costs more and is a hefty task. Outsourced hyperscale data centers take away these cost worries, which has increased the demand for them.

Hyperscale data centers are very different from ordinary data centers. They support networks that deliver unprecedented speed and can meet bandwidth demands as and when required. When there is a massive IT budget, most manual processes tend to be replaced by application integration. Hence, hyperscale data centers automate their servers and make their connections even stronger.

With the increasing demand for the internet, data volumes have grown exponentially, by factors of 100. Only hyperscale data centers can support this level of growth. This increase in data is not going to pause any time soon. Therefore, the industry should be ready with more and more hyperscale data centers.

An inside look at a hyperscale data center

Some people still doubt the security of public or private cloud. But once you get an inside look at a hyperscale data center, it becomes difficult to doubt any further. These data centers are built in places with low humidity, as humidity can be one of the biggest causes of failure. Cooling is also kept in mind while selecting the location: places that require less cooling are a very good cost saver. Every hyperscale data center should also be well connected to underwater data cables, which demands a good amount of investment.

The security system of a data center is doubly walled, with entry restricted to authenticated employees. A typical hyperscale data center is not just another traditional data center overloaded with racks and servers. As power density improves in hyperscale storage, the rows of racks can be reduced, which makes the facility look less populated. The power budget and server count are higher, but the arrangement is unlike that of any conventional data center.

Proper insulation is maintained using industrial refrigeration installations.

With the help of swamp coolers, naturally cool outside air can easily be used inside a hyperscale data center to bring it down to the required temperature…

Before this, a large amount of money was spent on keeping temperatures low. Large IT infrastructures realized that a good share of the cooling costs could be cut by using outside air. That led to the idea of “swamp coolers”. This is a form of external cooling in which water is sprayed in front of fans, which then spread the cooled air and keep the rooms of the data center cool.

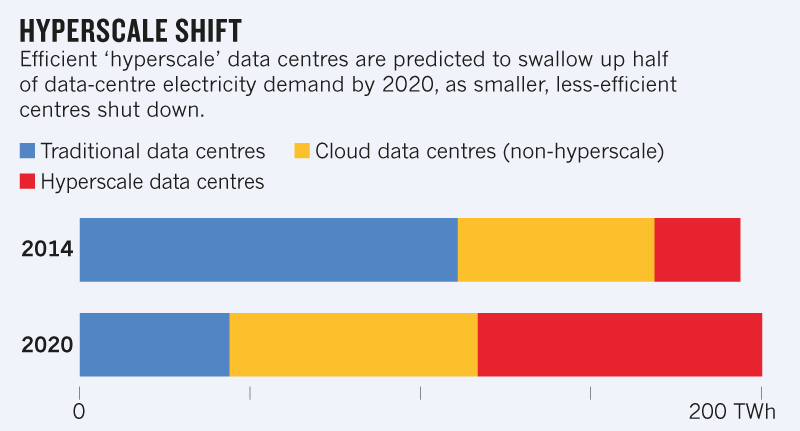

Hyperscale data centers have reduced the electrical expenditure that mostly went into cooling and densely populated racks…

Also, hyperscale data centers are known for employing fewer experts. A hyperscale data center is made hyperscale by its magnitude and complexity, not by the security deployed. Redundancy is built into the workloads rather than the power supply, which reduces electrical costs. The workloads are balanced across all the servers, and they are arranged so that they also help with temperature control.
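The idea of making workloads redundant and balancing them across servers can be sketched as follows. This is a simplified illustration, not how any particular provider does it: the function `place_workloads`, the `replicas` parameter and the greedy "least-loaded server first" rule are all assumptions made for the example.

```python
def place_workloads(workloads, servers, replicas=2):
    """Place each workload on `replicas` distinct servers, least loaded first.

    workloads: dict mapping workload name to its load (arbitrary units)
    servers:   list of server names
    Returns (placement, load) where placement maps each workload to its
    servers and load maps each server to its total assigned load.
    """
    if replicas > len(servers):
        raise ValueError("need at least as many servers as replicas")

    load = {server: 0 for server in servers}
    placement = {}

    # Handle the heaviest workloads first so the lighter ones can even things out.
    for name, cost in sorted(workloads.items(), key=lambda item: -item[1]):
        # Pick the currently least-loaded servers; ties are broken by name.
        targets = sorted(servers, key=lambda s: (load[s], s))[:replicas]
        for server in targets:
            load[server] += cost
        placement[name] = targets

    return placement, load


if __name__ == "__main__":
    workloads = {"search": 4, "mail": 3, "video": 2, "logs": 1}
    servers = ["s1", "s2", "s3"]

    placement, load = place_workloads(workloads, servers, replicas=2)
    for name, targets in placement.items():
        print(name, "->", targets)
    print("load per server:", load)
```

Because every workload runs on two different servers, losing any single server still leaves one copy of each workload running, and spreading the load evenly also spreads the heat those servers produce.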

All this has redefined the data center. Now every IT infrastructure, from the ordinary to the largest, can derive efficiency from the computing activity of a hyperscale data center. Elasticity and adaptability, maintained with the security of an on-premises cloud and at such a low cost, are what the future of IT demands and should get: hyperscale computing.

