There are several factors to consider when setting up a server: performance, reliability, scalability and control are the most widely known. Depending on what you do and what you want from your server, you can set it up in a number of ways.

Each setup has its own pros and cons and suits a particular kind of user, so the right choice depends on your needs.

Given the problems users run into when setting up their compute environment, we decided to write this blog post to explain the most common combinations.

So continue reading…

-

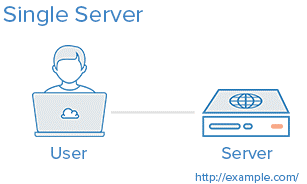

One server for all the tasks

In this arrangement, a single server takes care of everything needed to run your application. This means your database and application share the resources of the same machine.

Where to use?

This setup is best for getting an application up and running quickly, because the web server, application server and database server all live on one machine. The downside is that it offers little scalability and almost no isolation between components.

Pros:

It is one of the simplest setups to build.

Cons:

The application and database contend for the resources of the same server, which can lead to poor performance and high application lag.

The system isn’t readily scalable.

-

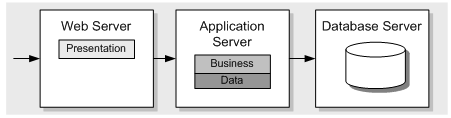

Separate Database & Application Servers

The database and the application can each have their own server, so there is no resource conflict between them because the two run on different machines.

Security is also improved with a separate database server because you can put security controls in place at each tier.

Use Case: This setup works well when you want to get an application running quickly without the application and database fighting over the same resources.

Pros:

The application and database run on two different servers, so they do not compete for resources; each has its own to use.

You can scale each tier separately. For example, you can scale the database server by adding more resources without making changes to the application server.

Because the database and application run on separate machines, security can be better: an attacker who breaks into one server does not automatically gain control of the other.

Cons:

Maintaining such a setup is more complex than maintaining a single server.

Performance issues can arise if the network connection between the database and application servers has high latency or too little bandwidth. A common cause of high latency is too great a distance between the two servers.
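To get a feel for how much network latency can matter, here is a rough back-of-the-envelope sketch in Python. It assumes a request runs its database queries one after another. The function name and all the numbers (20 queries, 0.1 ms and 2 ms round trips, 1 ms per query) are made up for illustration and are not measurements.

```python
def request_db_time_ms(queries_per_request: int,
                       round_trip_ms: float,
                       query_exec_ms: float) -> float:
    """Estimate the time one request spends on sequential database queries.

    Each query pays one network round trip plus its own execution time.
    """
    return queries_per_request * (round_trip_ms + query_exec_ms)


# Hypothetical numbers: database on the same machine vs. on a separate server.
same_machine_ms = request_db_time_ms(20, round_trip_ms=0.1, query_exec_ms=1.0)
separate_servers_ms = request_db_time_ms(20, round_trip_ms=2.0, query_exec_ms=1.0)

print(f"same machine:     {same_machine_ms:.1f} ms")
print(f"separate servers: {separate_servers_ms:.1f} ms")
```

The point of the sketch is that the round-trip cost is paid once per query, so an application that issues many small queries per request feels the distance between the two servers far more than one that issues a few.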

-

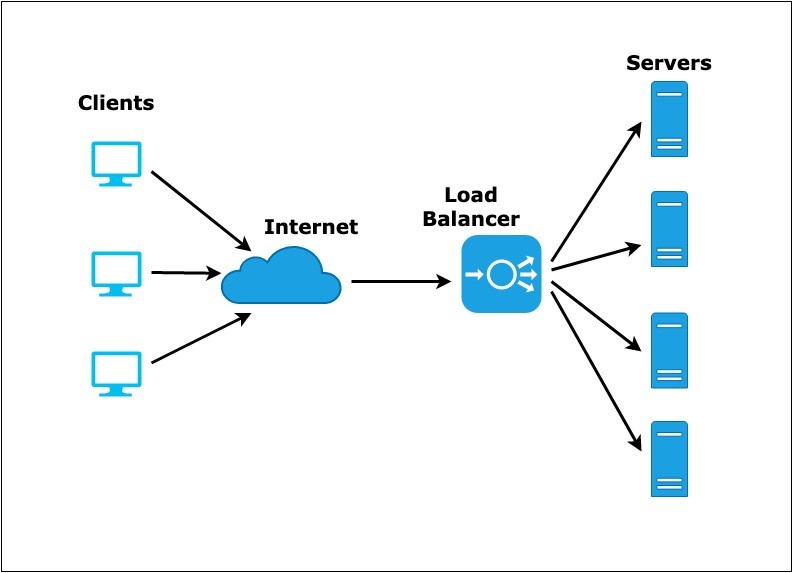

Load Balancer (Reverse Proxy)

Load balancers, as the name suggests, balance the workload across multiple servers, which improves both reliability and performance. If one of the servers behind the load balancer fails, the other servers can handle the incoming traffic until the fault has been rectified or the failed server is replaced.

A load balancer acting as a reverse proxy can also serve several applications through the same domain and port.
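The idea of spreading requests and skipping failed servers can be sketched in a few lines of Python. This is a minimal illustration of round-robin selection only, not how any particular load balancer is implemented. The class name, method names and backend names (`app1`, `app2`, `app3`) are hypothetical.

```python
class RoundRobinBalancer:
    """Pick backends in turn, skipping any that are marked as down."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._index = 0  # position of the next backend to try

    def mark_down(self, backend):
        # In a real system a health check would call this.
        self.healthy.discard(backend)

    def mark_up(self, backend):
        if backend in self.backends:
            self.healthy.add(backend)

    def next_backend(self):
        # Try each backend at most once per call.
        for _ in range(len(self.backends)):
            backend = self.backends[self._index]
            self._index = (self._index + 1) % len(self.backends)
            if backend in self.healthy:
                return backend
        raise RuntimeError("no healthy backends available")


balancer = RoundRobinBalancer(["app1", "app2", "app3"])
print([balancer.next_backend() for _ in range(4)])

balancer.mark_down("app2")  # simulate a failed server
print([balancer.next_backend() for _ in range(4)])
```

After `app2` is marked down, requests keep flowing to the remaining two servers, which is the reliability benefit described above.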

Best used in:

Environments that need frequent scaling through the addition of more servers. This is the case for organizations whose resource needs vary with customer demand. If you run such an organization, you are likely to benefit from a load balancer setup.

Pros:

Horizontal scaling is possible: you can add more servers to the system as needed.

It offers some protection against DDoS attacks because the number of client connections can be limited to a sensible amount.
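As a rough illustration of limiting client connections, the sketch below caps how many connections a single client address may hold open at once. It is a simplified model under stated assumptions: the class name, the per-client limit and the example IP address are all hypothetical, and real load balancers expose this through their own configuration rather than code like this.

```python
from collections import defaultdict


class ConnectionLimiter:
    """Cap the number of simultaneous connections per client address."""

    def __init__(self, max_per_client: int):
        self.max_per_client = max_per_client
        self.active = defaultdict(int)  # client address -> open connections

    def try_open(self, client_ip: str) -> bool:
        """Return True and count the connection if the client is under its cap."""
        if self.active[client_ip] >= self.max_per_client:
            return False  # over the limit: reject instead of passing it on
        self.active[client_ip] += 1
        return True

    def close(self, client_ip: str) -> None:
        """Release one connection slot for the client, if it holds any."""
        if self.active[client_ip] > 0:
            self.active[client_ip] -= 1


limiter = ConnectionLimiter(max_per_client=2)
print([limiter.try_open("203.0.113.7") for _ in range(3)])
```

A per-client cap like this only blunts simple floods from a few addresses; it is one layer of defence, not a complete answer to a distributed attack.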

Cons:

The load balancer, instead of improving performance, can easily become a bottleneck if it is not configured properly.

Such systems are more tedious to configure because of the added complexity.

The entire system depends on the load balancer, which makes it a single point of failure. If the balancer goes down, you will have a complete outage. Deploying multiple load balancers is recommended for redundancy.

-

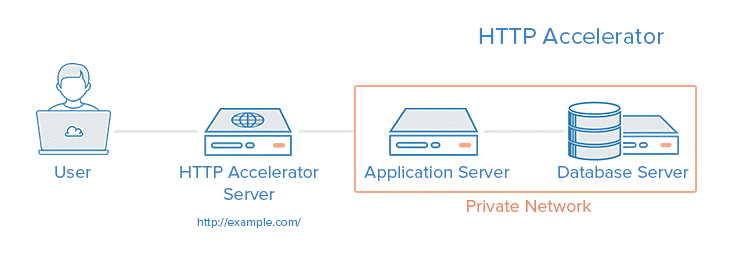

HTTP Accelerator (Reverse Proxy with Caching)

An HTTP accelerator, also known as a caching HTTP reverse proxy, is used to reduce the time it takes to serve files and other content to users through a range of techniques.

The main technique used by an accelerator is caching responses from a web or application server in memory. If you are new to the idea, caching keeps a copy of the content so that the same content can be served faster the next time it is requested.
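The caching idea described above can be sketched as a small in-memory cache sitting in front of an application server. This is a minimal illustration, assuming a fixed time-to-live per entry; the class name, the `fetch_from_origin` callback and the 60 second default are hypothetical and do not reflect any specific accelerator.

```python
import time


class CachingProxy:
    """Serve responses from memory while they are fresh, else ask the origin."""

    def __init__(self, fetch_from_origin, ttl_seconds=60.0, clock=time.monotonic):
        self.fetch_from_origin = fetch_from_origin  # callable: path -> body
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._cache = {}  # path -> (expires_at, body)
        self.hits = 0
        self.misses = 0

    def get(self, path):
        now = self.clock()
        entry = self._cache.get(path)
        if entry is not None and entry[0] > now:
            self.hits += 1  # fresh copy in memory, origin is not contacted
            return entry[1]
        self.misses += 1  # missing or expired: fetch and store a new copy
        body = self.fetch_from_origin(path)
        self._cache[path] = (now + self.ttl_seconds, body)
        return body


def slow_app_server(path):
    # Stand-in for the real web or application server.
    return f"<html>content of {path}</html>"


proxy = CachingProxy(slow_app_server, ttl_seconds=60.0)
proxy.get("/index.html")  # miss: goes to the application server
proxy.get("/index.html")  # hit: served from memory
print(proxy.hits, proxy.misses)
```

The second request never reaches the application server, which is where the reduction in load comes from.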

Best used in:

Environments with heavy dynamic web applications where many frequently requested files are better off cached.

Pros:

Increases site performance by reducing CPU load on the web server through caching and compression.

It can also be used as a reverse proxy load balancer.

Some caching software can also help protect your system against DDoS attacks.

Cons:

Needs a lot of fine-tuning to get the best possible performance.

The cache hit rate must be high enough to maintain a good level of performance on the server.

If the cache hit rate is low, performance could actually be reduced.
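Why a low hit rate can hurt is easier to see with a simple model. The sketch below assumes every request pays a small cache lookup cost and every miss additionally pays the full trip to the application server. The function name and the timings (5 ms lookup, 100 ms origin) are made up for illustration.

```python
def average_response_ms(hit_ratio: float, cache_ms: float, origin_ms: float) -> float:
    """Average response time when a cache sits in front of the origin server.

    Every request pays cache_ms; a miss also pays origin_ms.
    """
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return cache_ms + (1.0 - hit_ratio) * origin_ms


# Hypothetical timings: 5 ms cache lookup, 100 ms application server response.
for ratio in (0.0, 0.5, 0.9):
    print(f"hit ratio {ratio:.0%}: {average_response_ms(ratio, 5.0, 100.0):.1f} ms")
```

Under this model, a cache that never hits is slower than having no cache at all, because each request pays for the lookup on top of the trip to the application server.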

-

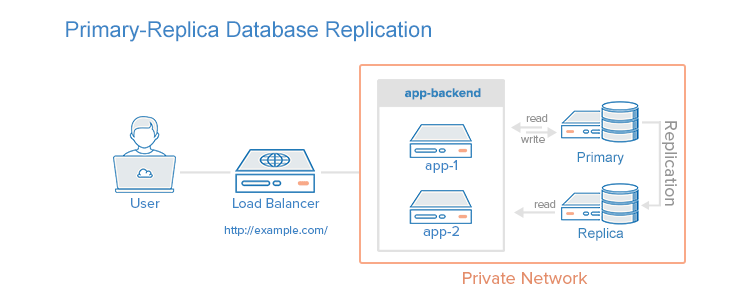

Primary-replica Database Replication

One effective way to improve the performance of a database tier is to implement primary-replica database replication. Replication requires one primary node and one or more replica nodes. In this setup, all updates are sent to the primary node, and reads can be distributed across all nodes.

Best used in:

Web applications where the read performance of the database tier needs to be increased.

Pros:

Improves database read performance by spreading reads across the replicas.

Can improve write performance if the primary is used exclusively for updates, since it then spends no time serving read requests.

Cons:

The application accessing the database must have a mechanism to determine which database nodes it should send update requests and read requests to.

If the primary node fails, no updates can be performed on the database until the issue is corrected.

A failure of the primary node affects every replica that depends on it, because the replicas stop receiving updates.
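The routing mechanism the application needs can be sketched as follows. This is a deliberately naive illustration: it treats any statement starting with `SELECT` as a read and everything else as an update. The class name and the node names (`primary`, `replica1`, `replica2`) are hypothetical, and a real application would normally rely on its database driver or a proxy for this.

```python
import itertools


class ReplicatedDatabaseRouter:
    """Send updates to the primary and spread reads across the replicas."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self.replicas = list(replicas)
        # Rotate through the replicas; None means reads fall back to the primary.
        self._replica_cycle = itertools.cycle(self.replicas) if self.replicas else None

    def node_for(self, sql: str):
        words = sql.split()
        first_word = words[0].upper() if words else ""
        if first_word == "SELECT" and self._replica_cycle is not None:
            return next(self._replica_cycle)  # read: any replica will do
        return self.primary  # update (or anything unrecognised): primary only


router = ReplicatedDatabaseRouter("primary", ["replica1", "replica2"])
for statement in (
    "SELECT * FROM users",
    "SELECT * FROM orders",
    "INSERT INTO users (name) VALUES ('sam')",
):
    print(router.node_for(statement), "<-", statement)
```

Sending anything that is not clearly a read to the primary is the safer default here, since an update sent to a replica would not reach the other nodes.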

Conclusion

Now that you know more about server setups, you should have a good idea of the kind of setup you would use for your own applications. Remember that the more you test, the better you will be able to run your compute environment without unnecessary complexity.
